Gmail without POP Fetch

Gmail is removing its POP fetch feature shortly (Feb 2026), if you have not already lost access to it. This has caused me to revisit a solution I implemented 10+ years ago but never publicly documented.

You Know Forwarding is a Thing, Right?

In this announcement, Google suggests that users “set up automatic forwarding (web)”.1 This has always been an option with Gmail, but it doesn’t work as smoothly as you would like. POP fetching solved a huge issue, though this may take a bit to explain.

Sidenote: Proper Mail Server Setup

All of this is a waste unless you properly set up your mail server. Things off the top of my head that you need to do:

- A fixed IP address. It may take months to establish the reputation of your IP, so you really don’t want it to change. This process includes scouring blacklists and getting your IP cleaned off of them.

- SPF DNS Entries

- DKIM DNS entries and message signing

- DMARC DNS entries

- Forward using Sender Rewriting Scheme
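The last item is the least familiar one, so here is a rough sketch of what a Sender Rewriting Scheme rewrite does to the envelope sender. It is a simplified illustration of the `SRS0=hash=timestamp=domain=local@forwarder` shape, not a drop-in replacement for a real SRS daemon such as postsrsd. The function names, the secret, and the example addresses are all made up for the example, and the hash details may differ from what your SRS implementation produces.

```python
import base64
import hashlib
import hmac
import time
from typing import Optional

BASE32 = "ABCDEFGHIJKLMNOPQRSTUVWXYZ234567"


def srs_timestamp(now: float) -> str:
    """Encode the day number (mod 1024) as two base32 characters."""
    days = int(now // 86400) % 1024
    return BASE32[days >> 5] + BASE32[days & 31]


def srs_forward(sender: str, forwarder_domain: str, secret: bytes,
                now: Optional[float] = None) -> str:
    """Rewrite an envelope sender so bounces come back via the forwarder.

    Simplified sketch: the tag is a truncated HMAC-SHA1 over the timestamp,
    original domain, and original local part.
    """
    local, _, domain = sender.rpartition("@")
    stamp = srs_timestamp(time.time() if now is None else now)
    payload = (stamp + domain + local).lower().encode()
    digest = hmac.new(secret, payload, hashlib.sha1).digest()
    tag = base64.b64encode(digest).decode()[:4]
    return f"SRS0={tag}={stamp}={domain}={local}@{forwarder_domain}"
```

Calling `srs_forward("alice@example.org", "mail.example.net", b"secret")` returns an address in your own domain, so the forwarded message passes SPF for your server instead of failing SPF for `example.org`.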

When you enable forwarding, Gmail scans those forwarded messages for spam. I hear you say, of course it does, I want that. Sure, but in some instances, if the message is spammy enough, Gmail will reject the delivery of that message:

<**********@gmail.com>: host gmail-smtp-in.l.google.com[192.178.163.26] said: 550-5.7.1 [35.165.231.100 12] Gmail has detected that this message is 550-5.7.1 likely unsolicited mail. To reduce the amount of spam sent to Gmail, 550-5.7.1 this message has been blocked. For more information, go to 550 5.7.1 https://support.google.com/mail/?p=UnsolicitedMessageError 41be03b00d2f7-c6e52fc8ca4si32799293a12.45 - gsmtp (in reply to end of DATA command)

This will cause your MTA to bounce the email all the way back to the potential spammer, which may leak your Gmail address to them. Not great, not awful. You likely didn’t want the spam anyway, and Gmail is pretty good about spam filtering (although not perfect). However, the mere fact that your mail server forwarded this spammy message means that Gmail may decrease the reputation of your mail server. Awful. Do it enough times and you will get a response like this:

host alt1.gmail-smtp-in.l.google.com[142.250.97.26] said: 421-4.7.28 [redacted IP address] Our system has detected an unusual rate of 421-4.7.28 unsolicited mail originating from your IP address. To protect our 421-4.7.28 users from spam, mail sent from your IP address has been temporarily 421-4.7.28 rate limited. Please visit 421-4.7.28 https://support.google.com/mail/?p=UnsolicitedRateLimitError to 421 4.7.28 review our Bulk Email Senders Guidelines. d25si107020vsk.333 - gsmtp (in reply to end of DATA command)

Really awful!

Worse? You may not even know this is happening. These messages will appear in your mail logs, but who reads those? They will also appear in the bounced message, but that is sent back to the spammer, not to you. Your first indication may be mail that is delivered slowly, or legitimate emails bouncing back to senders because Gmail thinks your mail server is spammy.

In short, forwarding may work for you, or you may only think forwarding is working for you.
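If you want to know which camp you are in, one option is to have a script read the mail log for you. The sketch below counts log lines that contain the two Gmail responses quoted above (`5.7.1` and `4.7.28`). It assumes a Postfix-style log where the remote reply is on the same line as the Gmail host name; the function name and the matching rules are mine, so adjust them to whatever your logs actually look like.

```python
import re
from collections import Counter

# Enhanced status codes taken from the two Gmail responses quoted above.
GMAIL_CODES = {
    "5.7.1": "message rejected as likely unsolicited mail",
    "4.7.28": "sending IP rate limited for unsolicited mail",
}

# An SMTP reply code (e.g. 550 or 421) followed by an enhanced status code.
CODE_RE = re.compile(r"\b[245]\d\d[- ](\d\.\d\.\d+)\b")


def gmail_rejections(lines):
    """Count spam-related Gmail rejections and deferrals in mail log lines."""
    counts = Counter()
    for line in lines:
        # Only look at replies from Gmail's inbound servers about spam.
        if "gmail-smtp-in.l.google.com" not in line:
            continue
        if "unsolicited" not in line.lower():
            continue
        match = CODE_RE.search(line)
        if match and match.group(1) in GMAIL_CODES:
            counts[match.group(1)] += 1
    return counts
```

Feed it an open log file, for example `gmail_rejections(open("/var/log/mail.log"))` (the path is an assumption and varies by distribution), and alert yourself if either count is nonzero.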

Be Less Spammy

I can hear you say ‘then just don’t forward spammy messages to Gmail.’ To which I respond, then don’t send me spammy messages!

But seriously, the answer is clearly to stop spammy messages before they get forwarded to Gmail. The best roll-your-own mail server solution that I know of for this is SpamAssassin. Yup, it still works … mostly.2

So, you can set up your MTA to reject messages above a certain SpamAssassin score. That should solve the Gmail reputation issue. Except, now you have another issue, or at least I do.

SpamAssassin isn’t perfect; it misclassifies (not a word but it should be) things in both directions. You could probably find a happy middle ground, but still, I am not comfortable with the possibility that email messages may just be discarded. When you are stuck waiting for that stupid 2-Factor email code, a small voice will nag in the back of your head: did SpamAssassin delete that email on me?

How did POP Fetching Solve this?

Finally, 500 words in and now we hit the f#&*ing point of this post!3

Well, instead of rejecting spammy messages, we delivered them locally to the user email_spam, and then Gmail would fetch those messages over the POP protocol. The cool thing was that Gmail would still run its own spam processing on them: it would move things incorrectly marked as spam into your inbox and only put actual spam in the Spam folder.
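The routing decision itself is tiny. Here is a sketch of it in Python, reading the `X-Spam-Status` header that SpamAssassin adds to scored messages. This is only an illustration of the logic; in practice the decision lives in your MTA or delivery agent configuration, and I am not claiming this is how my Postfix setup implements it. The function names and the `forward-to-gmail` label are made up, and 5.0 is simply SpamAssassin's default threshold.

```python
import re
from email import message_from_string

SPAM_MAILBOX = "email_spam"  # local account name used in the workflow list
SCORE_RE = re.compile(r"score=(-?\d+(?:\.\d+)?)")


def spam_score(raw_message: str):
    """Return the SpamAssassin score from X-Spam-Status, or None if absent."""
    msg = message_from_string(raw_message)
    match = SCORE_RE.search(msg.get("X-Spam-Status", ""))
    return float(match.group(1)) if match else None


def route(raw_message: str, threshold: float = 5.0) -> str:
    """Deliver spammy mail locally instead of rejecting or forwarding it."""
    score = spam_score(raw_message)
    if score is not None and score >= threshold:
        return SPAM_MAILBOX
    # Hypothetical label for "hand it to the normal SRS forwarding path".
    return "forward-to-gmail"
```

The key design choice is that nothing is ever discarded: a message either goes straight to Gmail or waits in the local spam mailbox to be picked up later.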

Cool.

It was cool, for many years. It had its drawbacks but it worked.456

But Alas, POP Access is no More

I can see Google’s perspective here. This is a weird feature to maintain. But given the small uproar that has occurred and the amount of time that Google kept this arcane feature alive, it seems that this was used by more people than you would imagine.

IMAP to the Rescue

Honestly, this is the real point of the post. My workflow above remains largely the same, except now I use imapsync to push the mail to Gmail rather than a POP pull.

Benefits

This has benefits, well one, namely I now control the frequency of when this happens. This is better, kind of. Previously, if you clicked the refresh icon, Gmail would force a pull from the POP accounts right then. Although, you could only do this once every 10ish minutes.

Drawbacks

No more spam filtering by Gmail on these messages. So, I now put everything SpamAssassin flags into Gmail’s Spam folder. This is a bit of a bummer, so I raised my spam threshold a bit to decrease the false positives.7

The End

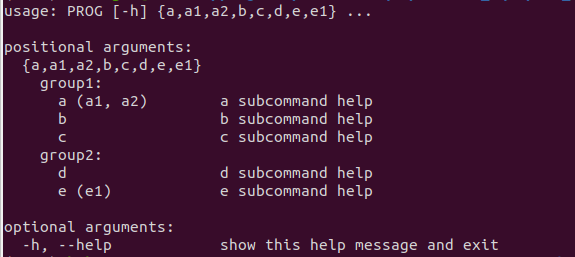

That is pretty much it. The workflow looks like:

- Postfix receives mail, routes it to SpamAssassin

- SpamAssassin scores the email, and routes it back to Postfix

- If spammy, Postfix redirects it to local account email_spam.

- Otherwise it forwards it on to Gmail using SRS forwarding

- A cron job runs every 5 minutes and uses imapsync to push messages in email_spam to Gmail/Spam
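The last step of that workflow can be sketched as a small Python wrapper that builds the imapsync command line. Treat this as a starting point under stated assumptions rather than my exact setup: the host names, passfile paths, the choice to sync `INBOX` into `[Gmail]/Spam`, and the optional delete-after-transfer are all guesses you should adapt. It defaults to `--dry` so a first run only prints what it would do.

```python
import shlex


def imapsync_command(local_host, local_user, local_passfile,
                     gmail_user, gmail_passfile,
                     delete_after=False, dry_run=True):
    """Build an imapsync command that pushes local spam into Gmail's Spam folder."""
    cmd = [
        "imapsync",
        # host1 is the local server holding the email_spam mailbox.
        "--host1", local_host, "--user1", local_user,
        "--passfile1", local_passfile,
        # host2 is Gmail; --gmail2 applies imapsync's Gmail presets.
        "--host2", "imap.gmail.com", "--user2", gmail_user,
        "--passfile2", gmail_passfile,
        "--gmail2",
        # Sync only the local INBOX and force it into Gmail's Spam folder.
        "--folder", "INBOX",
        "--f1f2", "INBOX=[Gmail]/Spam",
    ]
    if delete_after:
        # Assumption: remove messages locally once they are transferred.
        cmd.append("--delete1")
    if dry_run:
        cmd.append("--dry")
    return cmd


def as_shell(cmd):
    """Render the command as a single shell-quoted string for logging."""
    return shlex.join(cmd)

# Hypothetical crontab entry to run a script built on this every 5 minutes:
# */5 * * * * /usr/local/bin/push_spam.py
```

To actually run it, pass the list to `subprocess.run(cmd, check=True)` with `dry_run=False` once the dry run output looks right.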

1. Yes, I realize just signing over my mail duties to Gmail is an option. But I personally don’t want to do that; I have other uses for my mail server besides just my personal email. Plus, I believe that currently costs about $10/month and likely more in the future. But if it works for you, that is certainly easier.

2. I am not the person to discuss all the tweaks you can do with SpamAssassin. That is a long path and I am sure there are many improvements I could make.

3. Comically, no, this is still not the point of this post.

4. The main drawback being that it only refreshed these POP accounts about every 20 minutes. But, you could have up to 5 POP accounts. Gmail would then check one account every 4ish minutes. It wasn’t always perfect, but it generally worked that way. If you map 5 account names to the same user in Dovecot, which I did, then your POP account was refreshed 5 times faster than everyone else’s.

5. A second footnote? Yeah, the prior note was getting a bit long. The other oddity was that Gmail would at times refuse to download some of the messages from the POP account. These were mostly messages containing viruses, so it wasn’t a big deal. Plus, these messages would just get left behind on the server, so you could ssh in and use mutt to read them in a pinch if something went wrong.

6. A third footnote … come on! Well, this was less a Gmail problem than a me issue. For a while I had a hard time getting certbot to properly restart Dovecot after updating the SSL cert. The POP access would silently fail in Gmail (I said less a Gmail problem) unless you looked in the settings page, where it would have a big red banner. Sometimes when this happened I would have mail delayed for weeks. When I reconnected it, I would then immediately see what types of messages SpamAssassin had false positives for. The best solution ended up being a forced reload of Dovecot every night at 2am. Maybe not ideal, but super easy.

7. I do have a trick for teaching the Bayesian filter spam and ham that helps. If I get the impulse, maybe I will write that up sooner than 4 years from now.